Our imagination is a powerful cognitive skill. When I walked into the living room of my new apartment, I experienced a rectangular empty space with a dusty concrete floor and hollow-sounding acoustics. But in my mind I was already furnishing and decorating. I imagined a blue carpet on the floor, the walls lined with bookcases, a large table on the far end, and a comfortable couch near the window.

Before moving in I drew a floor plan on a piece of paper. I also made paper cut-outs of the top view of all my furniture. Then I moved them around on the floor plan to find the best configuration. Of course, I still had to imagine what it would look like from a normal point of view. But at least I could choose between configurations that were physically possible. If I had had the skill, I could have drawn an “artist's impression” of my living room in perspective. Then I could have seen my chosen configuration in 3D: my bookcases, tables, couch, and chairs (no plants).

Floor plans and perspective drawings can be considered tests of my imagined living room. The real test came after moving in, putting everything in place, and actually living there. Of course I discovered that some things didn’t quite work as imagined. The chest of drawers, for example, was inconveniently blocking my path from the door to the kitchen.

We now have a new tool to guide our imagination: augmented reality. I can use the camera and the display on my phone or tablet to see a live view of my living room and additional virtual furniture. I can put a virtual chair in the view of my real room and see whether it fits. The concept of “fitting” has a twofold meaning here. Does it fit aesthetically? And does it fit metrically?

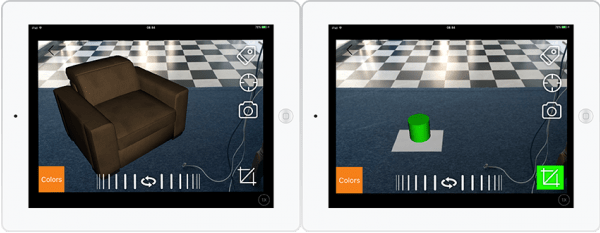

The aesthetic question can be answered quite easily because of the sophistication of 3D graphics technology. Thanks to the push from the gaming industry over the last two decades, we can now see quite realistic renderings on a mobile phone or tablet. The 3D models are so detailed that objects like couches can be represented down to the stitching on the pillows. The image resolution and color depth are also high enough that material qualities and lighting effects are truly convincing. There is not much left to the imagination, so to speak.

One problem that is not solved in a standardised way is the size of the virtual object. Since the image from the camera is a perspective projection, the third dimension (depth) is lost: a small chair close to the camera covers the same pixels as a large chair farther away. Somehow the system needs to know how to properly scale the virtual furniture.
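To make the scale problem concrete, here is a minimal sketch using the idealised pinhole camera model, in which the size of an object in the image equals the focal length times its real size divided by its distance. The focal length of 1500 pixels and the chair sizes are made-up values for illustration, not measurements from my phone.

```python
def projected_size_px(real_size_m, depth_m, focal_length_px):
    """Pinhole model: image size = focal length * real size / depth."""
    return focal_length_px * real_size_m / depth_m


FOCAL_LENGTH_PX = 1500.0  # assumed focal length, expressed in pixels

# A 0.5 m wide chair at 2 m and a 1.0 m wide chair at 4 m (hypothetical values).
small_near = projected_size_px(0.5, 2.0, FOCAL_LENGTH_PX)
large_far = projected_size_px(1.0, 4.0, FOCAL_LENGTH_PX)

print(small_near, large_far)
print("Same size in the image:", small_near == large_far)
```

Both chairs occupy the same number of pixels, so the image alone cannot tell them apart. Only the ratio of size to depth survives the projection.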

There are multiple technologies to measure this 3D information: stereo cameras, time-of-flight cameras, and structured-light cameras. The common theme of these methods is that they all need extra hardware. A cheap alternative is to place an object in the scene that has known dimensions. Some retail chains now ask you to put their catalogue on the floor. The app then detects the picture that is on the cover of the catalogue and calculates its 3D position and 3D orientation. Then it shows the furniture at the proper scale.

In the video below you can see an even cheaper alternative. The object is a sheet of A4 paper that many people have lying around (that is, if you are not in the US or Canada). This standard-sized sheet has a length of 0.297 meters and a width of 0.210 meters. First you will see a virtual chair that is positioned in the corner of my living room by sliding it across the floor. Then it is rotated and subsequently scaled to a size that seems about right. Next you see a sheet of white A4 paper on the floor. Then a table appears that is scaled to the correct size. The last shot shows the table being rotated around the vertical axis.
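For the curious, here is one way a sheet of known size can give the floor a metric scale. This is a rough sketch and not necessarily what the app in the video does: it fits a planar homography that maps image pixels to floor coordinates in meters, using the four corners of the A4 sheet. The pixel coordinates are invented for illustration, the corner detection is assumed to have happened already, and the names `fit_homography` and `image_to_floor` are my own.

```python
import math


def solve_linear(a, b):
    """Solve a*x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate corner configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_homography(image_pts, floor_pts):
    """Fit the 3x3 homography (flattened, last entry fixed to 1) from 4 point pairs."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(image_pts, floor_pts):
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rhs.append(u)
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.append(v)
    return solve_linear(rows, rhs) + [1.0]


def image_to_floor(h, point):
    """Map an image pixel that lies on the floor to floor coordinates in meters."""
    x, y = point
    w = h[6] * x + h[7] * y + h[8]
    return ((h[0] * x + h[1] * y + h[2]) / w,
            (h[3] * x + h[4] * y + h[5]) / w)


# Corners of the A4 sheet on the floor, in meters (long edge along the first axis).
A4_FLOOR_M = [(0.0, 0.0), (0.297, 0.0), (0.297, 0.210), (0.0, 0.210)]
# Hypothetical pixel positions of those corners as detected in the camera image.
A4_IMAGE_PX = [(400, 900), (700, 900), (660, 780), (440, 780)]

homography = fit_homography(A4_IMAGE_PX, A4_FLOOR_M)

# Measure the real distance between two floor points picked in the image.
p = image_to_floor(homography, (300, 850))
q = image_to_floor(homography, (800, 850))
print("Distance on the floor in meters:", math.dist(p, q))
```

Once the floor has metric coordinates, the question of whether a table "fits metrically" becomes a matter of comparing its known footprint with distances measured this way. The sketch only holds for points that actually lie on the floor plane.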

Watch it now. I will discuss my own impressions below.

Feel free to watch it again if you think it went by too quickly.

First of all, I think the sophistication of 3D graphics technology is really amazing. I have to pinch myself sometimes to realise that it is running on my phone instead of the refrigerator-sized computers that I used in the 1990s.

In contrast, 3D computer vision technology is in its infancy. I think this video gives a sneak peek into the future. You may feel that using an A4 paper is kind of a cheap trick. But consider this. Look back at the picture above. That white thing on the left is a heater. What if my phone knows the dimensions of that object? What if my phone starts interpreting all the objects that are in view of the camera? What if it starts searching its database for more information about their properties? What if it simply asks you for more information about an object it has detected? You will probably be surprised. And you will no longer need to carry around A4 paper.

My imagination is running wild now.
