When you look at the photo above, what sizes do you think those 5 spheres have?

If I tell you that the sheet of paper on the right is A4, and that the photo was taken in my office with the camera lying flat on the edge of my desk, do your estimates change?

My original motivation for trying to understand 3D vision comes from scientific questions about human visual perception. How do we perceive the 3D shape and size of an object? How do we perceive the positions and attitudes of objects in our environment?

It is my longstanding ambition to answer these questions with a mathematical theory of 3D vision. The tools I have been using to formulate this theory are projective geometry, projective linear algebra, scale-space theory, and more recently rational trigonometry.

In this post I will focus on the visual prediction of the size of a sphere with the assumption that it rests on the ground plane. Another assumption is that there is a known object also resting on the ground plane, in this case a sheet of A4 paper.

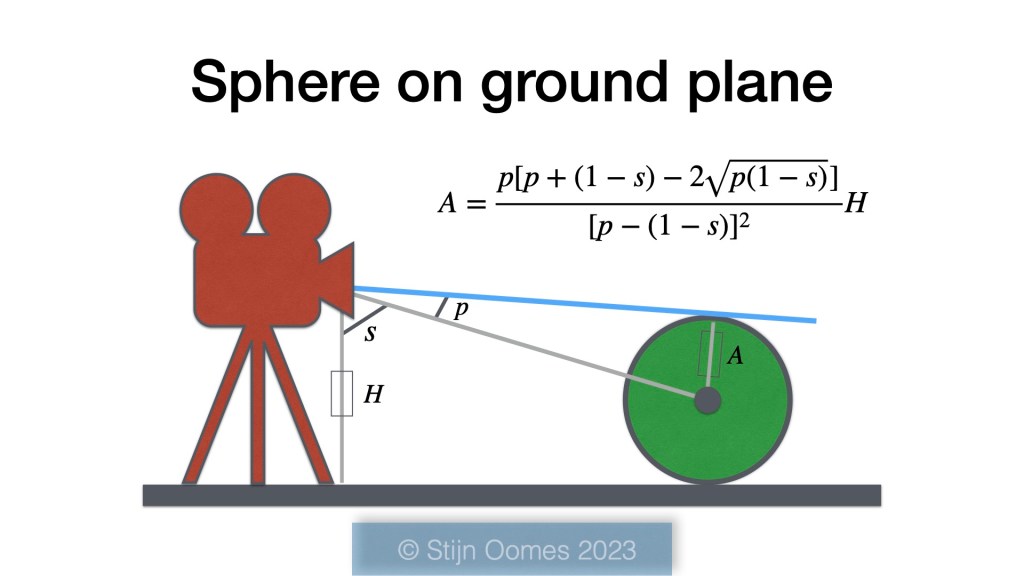

I will skip the mathematical analysis of the projection of the sphere on the image plane and the segmentation of figure from ground. Note that I am using quadrances and spreads from rational trigonometry to express the relevant variables. The visual geometry of the situation is illustrated below.

My goal is to find the size of the sphere, expressed as its radius. The camera is at a known height above the ground plane. A spread gives the visual direction of the sphere relative to the gravitational direction (straight down). Finally, the visual semi-spread gives a measure of the opening of the visual cone that connects the viewpoint with the surface of the sphere.

I find this formula for the radius as a function of height, spread, and semi-spread very beautiful. It is not too complicated and very easy to implement in Python, or any other computer language.
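A minimal Python sketch of such a function could look like the following. It is derived directly from the geometry described above, under stated assumptions: the spread is sin² of the angle between straight down and the axis of the visual cone, the semi-spread is sin² of the half-opening angle of that cone, and the centre of the sphere lies below the camera. The names `sphere_radius` and `spreads_from_scene` are illustrative only, and the expression may differ in form from the formula discussed here.

```python
import math


def sphere_radius(height, spread, semi_spread):
    """Radius of a sphere resting on the ground plane.

    Assumptions (not taken from the post):
      spread      = sin^2 of the angle between straight down and the cone axis
      semi_spread = sin^2 of the half-opening angle of the visual cone
      the sphere centre is below the camera (radius < height)
    """
    if not (0.0 <= spread <= 1.0) or not (0.0 < semi_spread < 1.0):
        raise ValueError("spread must be in [0, 1], semi_spread in (0, 1)")
    sin_half = math.sqrt(semi_spread)   # radius / distance to the centre
    cos_dir = math.sqrt(1.0 - spread)   # (height - radius) / distance to the centre
    return height * sin_half / (cos_dir + sin_half)


def spreads_from_scene(height, radius, ground_distance):
    """Synthetic check: the spreads a sphere of known size would produce."""
    drop = height - radius                          # vertical offset to the centre
    quadrance = ground_distance ** 2 + drop ** 2    # squared distance to the centre
    spread = ground_distance ** 2 / quadrance
    semi_spread = radius ** 2 / quadrance
    return spread, semi_spread


if __name__ == "__main__":
    # Made-up scene: camera 1 m high, sphere of radius 0.1 m, 2 m away along the floor.
    s, t = spreads_from_scene(1.0, 0.1, 2.0)
    print(sphere_radius(1.0, s, t))
```

The second function only exists to generate consistent test inputs: it builds the two spreads from a scene with a known radius, so that the first function can be checked by recovering that radius.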

Now let us put this formula to the test. How well does it predict the sizes of the spheres?

Above is a photograph taken with one of the rear cameras on my iPhone 12. The A4 and the spheres are resting on the floor. I have used the A4 to determine the height of the camera. (You can check out the formula for the camera height and its derivation here.)

I measured the diameters of the three smaller spheres with a calliper. The calliper is not big enough to measure the two larger spheres, so for those I just trusted the supplier of these styrofoam balls. (I admit, I should devise a method to measure their diameters properly.)

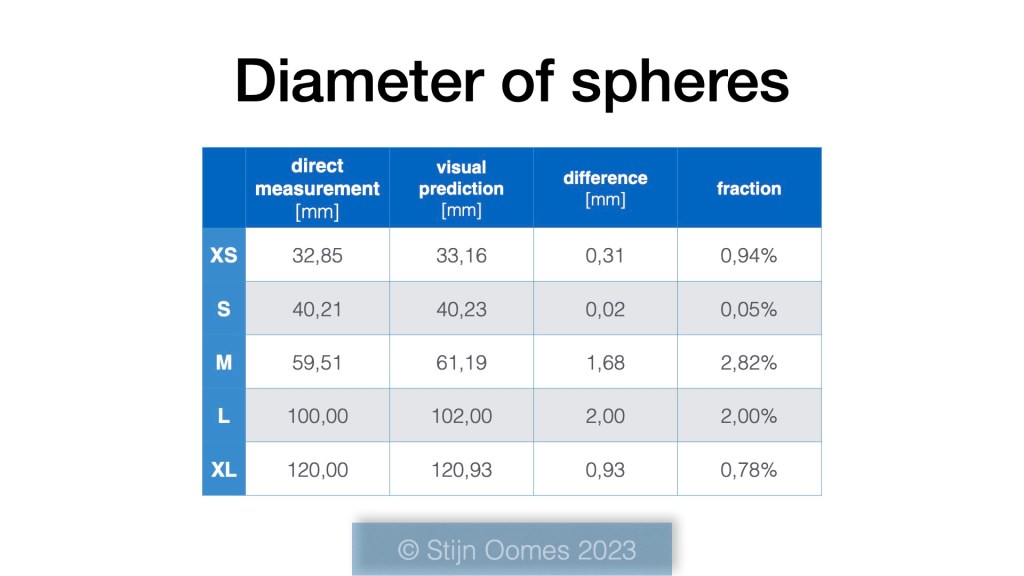

Here are the results.

The differences between the direct measurements and the visual predictions range from hundredths of a millimetre to about a millimetre. I am still impressed by these accuracies, even after getting results like these for a number of years now.

The fraction in the last column is the difference divided by the direct measurement, expressed as a percentage. It ranges from a few hundredths of a percent to a few percent. Not too bad either.
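For readers who want to reproduce that last column, the calculation can be sketched as below. The function name and the example numbers are made up for illustration and are not taken from my measurements; I also assume the difference is taken as prediction minus direct measurement, so the sign shows whether the prediction is too large or too small.

```python
def relative_difference_percent(measured, predicted):
    """Difference divided by the direct measurement, as a percentage."""
    if measured == 0:
        raise ValueError("the direct measurement must be non-zero")
    return (predicted - measured) / measured * 100.0


if __name__ == "__main__":
    # Hypothetical values in millimetres, for illustration only.
    measured_mm = 50.0
    predicted_mm = 50.4
    print(f"{relative_difference_percent(measured_mm, predicted_mm):.2f} %")
```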

I can think of many applications that do not need this level of accuracy, for example tracking a ball in sports like soccer ⚽️ or basketball 🏀.

There might be applications where this type of accuracy is appreciated. A brightly colored sphere may in that case act as a probe or measurement gauge.

How does this compare to human perception of the size of these spheres?

There is abundant literature on the many errors that humans make in estimating all kinds of geometrical attributes. It is safe to say that the accuracy achieved here is much higher than that of humans. But in context, most humans have no problem correctly adjusting their hand to pick up one of these balls. That is clearly beyond the capabilities of the system used in this experiment.