There is presently a limited number of computer vision capabilities in Apple’s impressive technology stack. The main one that comes to mind is the face detection functionality. You can use it in the Camera app on your iDevice and in the Photos app on your Mac.

Spheres in perspective

When I teach my workshop on 3D vision, the students also play with a 3D graphics framework called SceneKit. It is very powerful and easy to use. With just a few lines of code they can create a vivid 3D scene. At some point in the tutorial, the students have created a scene with a red sphere on a grey shiny floor against a deep blue sky. Then they start playing with the controls, changing the distance, rotating the scene, or panning to the side. Invariably someone pushes the sphere to one of the corners of the image and then checks with me whether there is a mistake in the code.

Detect position and orientation of faces with iOS

4 October 2017 – I updated the code for Swift 4 and iOS 11. You can find it here.

When an iPhone is processing an image from one of its cameras, the main purpose is to make it look good for us. The iPhone has no interest in interpreting the image itself. It just wants to adjust the brightness and the colours, so that we can optimally enjoy the image.

There is however one exception. The iPhone can detect whether there is a human face in the image. It is not interested in who it is, merely that there is a face present. It can even keep track of multiple faces.

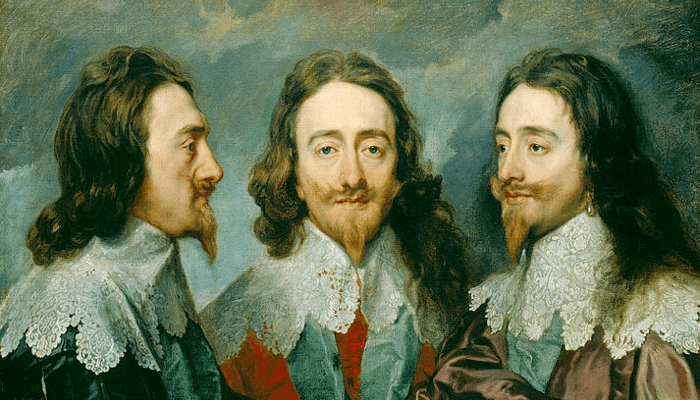

3/4 view

The three guys in the painting below are all depictions of King Charles I, painted from different viewpoints by Anthonis van Dyck in 1636. In the middle we see a frontal view (“en face”), on the left a side view (“en profil”), and the most intriguing is the one on the right: the three-quarter view (“en trois quarts”). So here is the question that has bugged me for some time. Why is it called three-quarter, or 3/4? Three quarters of what?

What can your iPhone see?

The short answer is: not much.

Well. Maybe we first have to talk about what it means to “see”. Vision is an extremely rich natural phenomenon. Most of us humans have the uncanny ability to turn light into meaning – as do many other species in the animal kingdom. Vision is mainly used for navigation and recognition. We use our eyes to detect objects in our environment and use the shapes and layout of these objects to navigate our way through life.

Switching to Swift

This week I seriously started learning Swift. Swift is a novel programming language developed by Apple to replace Objective-C. I already like it and I definitely enjoy the learning process. Some iOS developers I know are talking about how they love Swift. I am not there yet, although I have found three things that may ignite my love.

Distractions from user focus

In the industrial production process from idea to use, the consumer came last – almost as an after-thought. That is where the term “end-user” comes from. In the present era of smartphones and tablets and apps, an approach that focuses on the user, first and foremost, is uncontroversial.

Your eye is not a camera

Although sometimes credited to the Renaissance artists and engineers, the camera obscura, or pinhole camera, was already used by the Chinese in the 4th century BC and the Arabs in the 10th century AD. If you have never seen one in action, you are missing out. The images have a vibrant dreamlike quality, especially when objects in the scene are moving.

Railroad panoramas

I don’t drive. I tried to get my driver’s license several times, but I failed. The main reason is quite ironic. My control of the car was up to standard, but all the examiners stated that I lacked the perceptual abilities to safely navigate traffic. At that moment, I had spent almost half of my life studying visual perception. That knowledge apparently does not transfer to my visual skills at all.

In perspective

Being skilled in the art of drawing a convincing scene in linear perspective is no longer a guarantee of a successful career. For roughly four centuries this was a pretty good tool to have in your kit as a visual artist – from the moment that Filippo Brunelleschi gave his demonstration of a perspective rendering of the Baptistery in Florence in 1425, right up until Joseph Nicéphore Niépce took the first photograph of a view from a window in Saint-Loup-de-Varennes in 1826.